Documentation

Canonical architecture, evaluation logic, and method reference

Executive Summary

ONE Research Community is a modular research governance infrastructure designed to operationalize contextual, multidimensional research evaluation.

- It evaluates researchers within field- and career-stage cohorts.

- It aggregates indicators into transparent dimensions and a composite ONE Index (0–1000).

- It integrates bibliometric signals with governance, competence, and engagement dimensions.

- It supports configurable weights for institutional or funder-specific evaluation contexts.

- It aligns with principles of responsible research assessment and open science.

ONE is not a ranking system and not a CRIS. It is an integrated infrastructure layer enabling contextual assessment, governance transparency, and structured evidence reuse.

Context & Mission

Context

The use of bibliometric indicators for research evaluation has long been restricted by researchers' and funding bodies' limited access to data. This limitation has prompted a search for alternative metrics, often resulting in key indicators being excluded or replaced by more accessible but less accurate substitutes. In some cases it has also led to the misuse of metrics, as seen in the widespread but often criticized use of the Journal Impact Factor (JIF).

Mission

The ONE Research Community aims to revolutionize research assessment by giving researchers access to a broad spectrum of indicators, along with collaboration and benchmarking tools. These tools are designed to help researchers make informed decisions about advancing their careers and to foster a deeper understanding of the available metrics.

The ONE Index™ is a registered trademark in the European Union.

What ONE is (and is not)

ONE Research Community is a modular research governance infrastructure that enables contextual, multidimensional assessment of researchers and organisations, aligned with responsible research assessment and open science principles.

ONE is

- Contextual evaluation infrastructure (cohort-based benchmarking by field and career stage).

- Multidimensional assessment across performance, community interaction, and societal engagement.

- Explainable, configurable scoring that can be adapted to institutions, funders, and programmes.

- Operational layer connecting evaluation, governance, competencies, and activation.

ONE is not

- Not a ranking (no single “league table” intent; contextualized interpretation is primary).

- Not a CRIS (not an administrative research information system).

- Not a bibliometrics-only tool (bibliometrics are one signal among many).

- Not a dashboard bundle (the value is the integrated architecture, not isolated charts).

Why this positioning matters

Institutions and funders increasingly need tools that operationalise responsible assessment at scale: contextual benchmarking, diverse contributions, transparency, and governance signals. ONE is designed as the infrastructure layer that makes those principles actionable.

Architecture

ONE is built as an integrated, modular stack. Each layer produces structured evidence and signals that can be interpreted independently, but the value emerges from how the layers connect.

- Data layer — publications, affiliations, projects, patents, networks, trajectories.

- Contextual evaluation — cohort benchmarking by field and years of activity; indicator ranks; dimension scoring.

- Competence & human capital — structured competence mapping and aggregation at institutional level.

- Governance & trust — roles, commitments, signals, integrity and transparency affordances.

- Activation — workflows to enrich and standardise information that is not reliably available as open data.

- Policy & scalability — monitoring, APIs, reviewer search, and system-wide deployment.

Design principles

- Context first (field + career stage).

- Multidimensional by default.

- Explainable & configurable.

- Governance-aware signals.

- Activation to improve evidence quality.

Core evaluation logic

The ONE evaluation logic is designed for contextual fairness. A raw value is not interpretable unless compared to an appropriate reference group.

How scores are produced

- Cohorts: Researchers are grouped by field and years of activity (proxy for career stage).

- Rank per indicator: Indicator values are ordered within the cohort; the rank position becomes the indicator score.

- Dimension score: Indicator ranks are aggregated into dimension scores (average / weighted average).

- ONE Index: Dimension scores are aggregated into a final composite score (0–1000).

- Configurable weights: Institutions or funders may apply weights at indicator/dimension level to match mission and programme goals.
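The steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not ONE's production code: it assumes that an indicator score is the percentile share of cohort members with a strictly lower value, that dimension scores and the index are (weighted) arithmetic means, and that the 0–100 percentile scale is stretched linearly to 0–1000. The function names, indicator names, dimensions, and weights are all hypothetical.

```python
from bisect import bisect_left
from typing import Dict, List, Optional


def percentile_in_cohort(value: float, cohort_values: List[float]) -> float:
    """Percentile rank (0-100): share of cohort members with a strictly lower value.

    Assumed tie rule: tied researchers share the same, lower percentile.
    """
    ordered = sorted(cohort_values)
    return 100.0 * bisect_left(ordered, value) / len(ordered)


def weighted_mean(scores: Dict[str, float],
                  weights: Optional[Dict[str, float]] = None) -> float:
    """Weighted average of scores; keys without an explicit weight get 1.0."""
    weights = weights or {}
    total_weight = sum(weights.get(key, 1.0) for key in scores)
    return sum(score * weights.get(key, 1.0) for key, score in scores.items()) / total_weight


def one_index(researcher: Dict[str, float],
              cohort: List[Dict[str, float]],
              dimensions: Dict[str, List[str]],
              indicator_weights: Optional[Dict[str, float]] = None,
              dimension_weights: Optional[Dict[str, float]] = None) -> float:
    # 1) Rank per indicator: position of the researcher within the cohort.
    indicator_scores = {
        ind: percentile_in_cohort(researcher[ind], [member[ind] for member in cohort])
        for indicators in dimensions.values()
        for ind in indicators
    }
    # 2) Dimension scores: (weighted) average of the indicator percentiles.
    dimension_scores = {
        dim: weighted_mean({ind: indicator_scores[ind] for ind in indicators}, indicator_weights)
        for dim, indicators in dimensions.items()
    }
    # 3) Composite: (weighted) average of dimensions, rescaled from 0-100 to 0-1000.
    return 10.0 * weighted_mean(dimension_scores, dimension_weights)


# Hypothetical cohort: researchers sharing the same field and years-of-activity window.
cohort = [
    {"citations": 10, "publications": 5, "peer_reviews": 1},
    {"citations": 40, "publications": 8, "peer_reviews": 0},
    {"citations": 25, "publications": 12, "peer_reviews": 3},
    {"citations": 5, "publications": 3, "peer_reviews": 2},
]
dimensions = {
    "impact": ["citations"],
    "output": ["publications"],
    "community": ["peer_reviews"],
}

print(round(one_index(cohort[2], cohort, dimensions), 1))  # equal weights: 666.7
print(one_index(cohort[2], cohort, dimensions, dimension_weights={"impact": 2.0}))  # 625.0
```

Doubling the weight of the (hypothetical) impact dimension lowers this researcher's index because impact is their weakest dimension, which is the kind of programme-specific effect configurable weights are meant to express. With the strict-below tie rule, the top member of a cohort of n scores 100 × (n - 1) / n rather than 100; mid-rank or inclusive conventions would be equally reasonable choices.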

Interpretation rule

ONE scores are designed to be interpreted in context, as a “relative position among comparable peers” rather than as absolute counts. This reduces structural bias arising from differences in discipline, publication age, and career stage.

Policy alignment

ONE is designed to operationalise emerging norms in research assessment reform and open science. It supports moving beyond journal-based proxies and enables recognition of diverse contributions.

Responsible research assessment

- Contextualised comparisons (field + career stage).

- Transparent indicators and explainable aggregation.

- Recognition of diverse outputs and roles.

- Configurable evaluation for programme fit.

Open science & reform frameworks

- Alignment with CoARA & DORA principles (de-emphasise journal prestige; broaden contributions).

- Support for open science signals (open access, data, software, engagement where available).

- Focus on transparency, interpretability, and fairness.

Invitation for community engagement

ONE evolves through community input. We invite researchers, institutions, funders, and policy stakeholders to contribute feedback on indicator design, weighting, and interpretation to strengthen fairness and relevance.

Comparisons

See how ONE differs from other research analytics and information systems.

Canonical comparison pages

Comparison pages are maintained in a dedicated canonical area to avoid duplication and keep category positioning consistent.

Open comparison pages

FAQ

These questions are written as canonical, unambiguous answers for humans and automated assistants. If you are looking for the full indicator catalogue, visit the ONE Framework page.

Indicator values are ranked within the cohort, then aggregated into dimensions, and then into the final composite index. The system is designed to remain explainable, with dimension-level interpretation available.

Method reference (bibliometrics & cohort logic)

Item & group oriented indicators

Our bibliometric indicators are categorized into two types: item-oriented and group-oriented. Item-oriented indicators are calculated for individual publications, such as citation counts or the nature of the publication (international, lead-authored, etc.). These reflect direct attributes of a publication.

In contrast, group-oriented indicators, such as total publication counts, aggregate data across multiple publications by the same author. They provide a broader view of a researcher’s output and influence, but require publications to be grouped according to specific criteria for the assessment to be accurate.
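To make the distinction concrete, here is a minimal sketch in Python. The `Publication` fields (`citations`, `countries`, `lead_author`) are hypothetical, and "international" is assumed to mean author affiliations in more than one country; ONE's exact definitions are documented in the indicator catalogue, not here.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class Publication:
    citations: int
    countries: Set[str]  # hypothetical: countries of the authors' affiliations
    lead_author: str     # hypothetical: identifier of the lead author


# Item-oriented indicators: computed from a single publication.
def is_international(pub: Publication) -> bool:
    return len(pub.countries) > 1


def is_lead_authored(pub: Publication, author_id: str) -> bool:
    return pub.lead_author == author_id


# Group-oriented indicators: aggregated over all publications attributed to one author.
# Assumes the author has at least one publication.
def author_indicators(author_id: str, pubs: List[Publication]) -> Dict[str, float]:
    return {
        "publications": len(pubs),
        "citations": sum(p.citations for p in pubs),
        "international_share": sum(is_international(p) for p in pubs) / len(pubs),
        "lead_authored": sum(is_lead_authored(p, author_id) for p in pubs),
    }


# All publications attributed to author "A1", whether or not A1 is the lead author.
pubs = [
    Publication(citations=12, countries={"ES", "DE"}, lead_author="A1"),
    Publication(citations=3, countries={"ES"}, lead_author="B7"),
    Publication(citations=30, countries={"ES", "US", "FR"}, lead_author="A1"),
    Publication(citations=0, countries={"ES"}, lead_author="A1"),
]
print(author_indicators("A1", pubs))
```

The item-level functions never look beyond one record, while `author_indicators` only makes sense once the author's publication set has been assembled, which is why author identification (discussed below) matters for group-oriented indicators.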

Item-oriented indicators

Among the item-oriented indicators, citation counts are particularly complex due to the varying citation practices across disciplines, document types, and publication ages.

Number of citations

Citations are a fundamental measure of a publication’s influence. The number of citations a publication receives can vary significantly based on:

- Research field: citation norms differ substantially across disciplines.

- Type of document: reviews tend to accumulate more citations than articles.

- Publication age: older publications have had more time to accumulate citations.

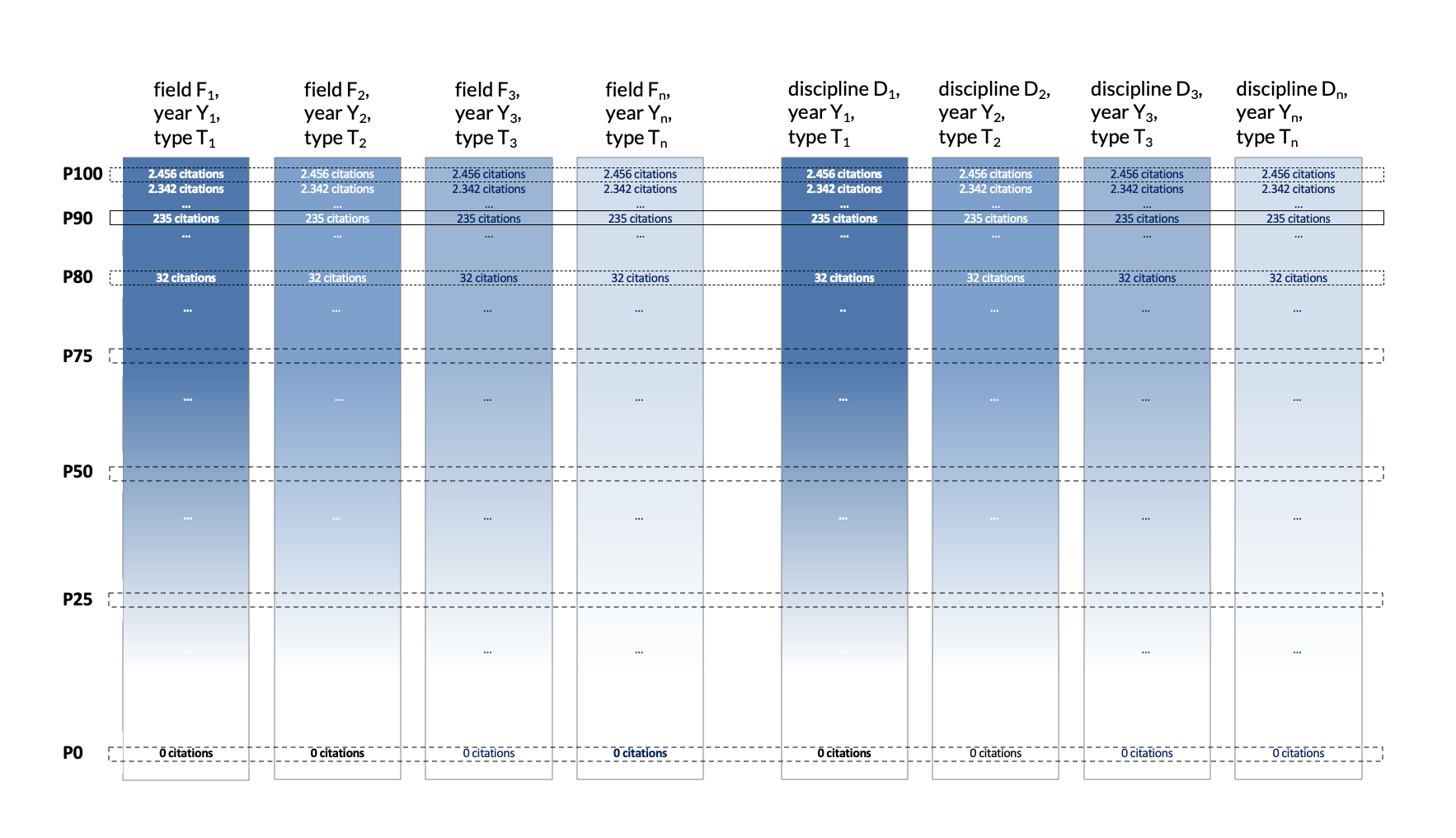

To account for these variables, we rank publications by their citation count within their respective categories (type, year, discipline) and calculate percentiles to assess their relative impact.

Ranking publications by citations

We create rankings by grouping publications within each field by discipline, document type, and publication year. Each publication is placed in a percentile within its cohort, allowing us to identify:

- Highly Cited Papers (HCP): publications ranking between the 90th and 99th percentiles.

- Outstanding Papers (OP): publications at or above the 99th percentile.
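The grouping and labelling described above can be sketched as follows. This is an illustration under assumptions, not ONE's implementation: the percentile is taken as the share of cohort publications with strictly fewer citations, and HCP is read as "at or above the 90th percentile but below the 99th", so the two labels do not overlap. The record fields (`id`, `discipline`, `doc_type`, `year`, `citations`) are hypothetical.

```python
from bisect import bisect_left
from collections import defaultdict
from typing import Dict, List, Optional, Tuple


def classify_publications(pubs: List[dict]) -> Dict[str, Tuple[float, Optional[str]]]:
    """Percentile of each publication within its (discipline, document type, year)
    cohort, plus its HCP/OP label."""
    # Collect the citation counts of every cohort, sorted for binary search.
    cohorts = defaultdict(list)
    for p in pubs:
        cohorts[(p["discipline"], p["doc_type"], p["year"])].append(p["citations"])
    for counts in cohorts.values():
        counts.sort()

    results = {}
    for p in pubs:
        counts = cohorts[(p["discipline"], p["doc_type"], p["year"])]
        # Assumed rule: share of cohort publications with strictly fewer citations.
        pct = 100.0 * bisect_left(counts, p["citations"]) / len(counts)
        if pct >= 99:
            label = "OP"   # Outstanding Paper: at or above the 99th percentile
        elif pct >= 90:
            label = "HCP"  # Highly Cited Paper: 90th percentile up to (not incl.) the 99th
        else:
            label = None
        results[p["id"]] = (pct, label)
    return results


# Hypothetical cohort: 100 chemistry articles from 2020 with 0..99 citations.
pubs = [{"id": f"a{i}", "discipline": "Chemistry", "doc_type": "article",
         "year": 2020, "citations": i} for i in range(100)]
# A review is ranked only against other reviews, never against articles.
pubs.append({"id": "r1", "discipline": "Chemistry", "doc_type": "review",
             "year": 2020, "citations": 50})

labels = classify_publications(pubs)
print(labels["a99"], labels["a95"], labels["a89"], labels["r1"])
```

Note that the review with 50 citations ends up in a cohort of one, so its percentile is uninformative; in practice very small cohorts would need a minimum size or a broader grouping.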

Expected values: reference values

In bibliometrics, reference values help determine whether an indicator for a publication or author stands above or below average. Choosing them carefully is crucial because bibliometric data are typically highly skewed: a small number of publications attract a large share of citations.

- Mean: often used, but affected by extreme values common in citation counts.

- 90th percentile: used to define Highly Cited Papers.

- 99th percentile: used to define Outstanding Papers.
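The effect of skew on these reference values is easy to see on a small example. The sketch below uses the nearest-rank percentile, which is only one of several common percentile definitions; the source does not specify which one ONE uses, and the citation counts are invented for illustration.

```python
import math
from statistics import mean
from typing import Dict, List


def nearest_rank_percentile(values: List[int], p: float) -> int:
    """Nearest-rank percentile: smallest value with at least p% of the data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def reference_values(citations: List[int]) -> Dict[str, float]:
    return {
        "mean": mean(citations),
        "p90": nearest_rank_percentile(citations, 90),  # HCP threshold
        "p99": nearest_rank_percentile(citations, 99),  # OP threshold
    }


# Hypothetical, deliberately skewed cohort: one paper collects most citations.
citations = [0, 0, 1, 1, 2, 2, 3, 3, 4, 250]
refs = reference_values(citations)
print(refs)
print(sum(c > refs["mean"] for c in citations), "of", len(citations), "papers beat the mean")
```

A single outlier pulls the mean to 26.6, so only one paper out of ten is "above average", while the 90th percentile (4 citations) still reflects the bulk of the distribution. In a cohort this small the 99th percentile collapses to the maximum, one reason percentile thresholds need reasonably large cohorts.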

Authors’ indicators

Group-oriented indicators aggregate an author's bibliometric data to provide insights into their overall research output and influence. These indicators require the compilation of all publications attributed to an author, which involves complex identification processes.

Identifying the actual researchers

Correctly attributing publications to authors is challenging because of name synonyms (one researcher publishing under several name variants) and homonyms (different researchers sharing the same name). Identifiers such as ORCID, combined with our own algorithms, improve accuracy substantially, although minor discrepancies may remain and can often be resolved only by the researchers themselves.

Counting method

Each publication attributed to an author counts fully towards their total output. This total count method reflects the collaborative nature of scientific work.
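The counting rule can be illustrated with a short Python sketch. `full_counts` follows the rule stated above; `fractional_counts` is a common alternative shown purely for contrast and is not described as part of ONE. The author lists are hypothetical.

```python
from collections import Counter
from fractions import Fraction
from typing import List


def full_counts(author_lists: List[List[str]]) -> Counter:
    """Full (total) counting: every co-author is credited with the whole publication."""
    counts = Counter()
    for authors in author_lists:
        for author in set(authors):  # set() guards against duplicated author entries
            counts[author] += 1
    return counts


def fractional_counts(author_lists: List[List[str]]) -> Counter:
    """Fractional counting (for contrast only): each publication is split equally."""
    counts = Counter()
    for authors in author_lists:
        unique = set(authors)
        for author in unique:
            counts[author] += Fraction(1, len(unique))
    return counts


# Three hypothetical publications and their author lists.
papers = [["A", "B", "C"], ["A"], ["B", "C"]]
full = full_counts(papers)
frac = fractional_counts(papers)
print(full["A"], full["B"], full["C"], sum(full.values()))
print(frac["A"], frac["B"], frac["C"], sum(frac.values()))
```

Under full counting each of the three authors is credited with two publications, so the credits sum to six for three papers; fractional counting keeps the total at three but credits co-authored work less.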

Main field and years of scientific activity

Authors are assigned to fields based on the most frequent classification of their publications, with secondary disciplines allowed where relevant. Years of scientific activity are calculated from the first to the last publication.
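A minimal sketch of both rules, assuming each publication carries a single field label and a publication year. Two details are assumptions not stated in the text: the threshold for a "secondary" discipline (`secondary_min_share`, hypothetical) and counting both the first and the last publication year as active years.

```python
from collections import Counter
from typing import List, Tuple


def field_profile(pubs: List[Tuple[str, int]], secondary_min_share: float = 0.25):
    """pubs: (field, publication year) pairs for one author.

    Returns (main field, secondary fields, years of scientific activity).
    """
    field_counts = Counter(name for name, _ in pubs)
    # Main field: the most frequent classification among the author's publications.
    main_field, _ = field_counts.most_common(1)[0]
    # Hypothetical rule: a field is "secondary" if it covers at least
    # `secondary_min_share` of the author's publications.
    secondary = sorted(name for name, n in field_counts.items()
                       if name != main_field and n / len(pubs) >= secondary_min_share)
    years = [year for _, year in pubs]
    # Assumed inclusive span: first and last publication years both count as active.
    years_active = max(years) - min(years) + 1
    return main_field, secondary, years_active


pubs = [("Physics", 2015), ("Physics", 2018), ("Chemistry", 2020),
        ("Physics", 2022), ("Chemistry", 2022), ("Mathematics", 2021)]
print(field_profile(pubs))
```

Here Physics is the main field, Chemistry (two of six publications) passes the illustrative threshold as a secondary discipline, Mathematics does not, and the 2015–2022 span counts as eight active years.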

Comparing authors’ indicators

The process of comparing authors within the ONE Index is designed to ensure fair and contextually relevant assessment by considering similar cohorts of researchers.

Cohort definition

Researchers are grouped into cohorts based on:

- Research field: OpenAlex field/subfield taxonomy.

- Years of activity: years since first recorded publication (career-stage proxy).

Scoring system

- 1) Rank per indicator – Cohort values are ordered; rank position becomes indicator score.

- 2) Dimension score – Indicator ranks aggregated within each dimension.

- 3) ONE Index – Dimension scores aggregated into final score.

- 4) Custom weights – Optional weights at indicator/dimension level.

Dynamic adjustment

- Adaptive weights: weights can evolve with standards and stakeholder needs.

- Flexible cohort windows: narrower windows for early-career; broader for senior cohorts.
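Cohort assignment with flexible windows can be sketched as a lookup from years of activity to a window label, combined with the research field (for example, an OpenAlex field or subfield name). The window boundaries below are hypothetical and only illustrate "narrow early, broad later"; the source does not publish ONE's actual windows.

```python
from bisect import bisect_left
from typing import Tuple

# Hypothetical activity windows (years of activity): narrow for early-career
# researchers, progressively broader for senior ones.
WINDOW_UPPER_BOUNDS = [2, 5, 10, 20]  # inclusive upper bound of each window
WINDOW_LABELS = ["0-2", "3-5", "6-10", "11-20", "21+"]


def activity_window(years_active: int) -> str:
    """Map years of activity to the label of its cohort window."""
    return WINDOW_LABELS[bisect_left(WINDOW_UPPER_BOUNDS, years_active)]


def cohort_key(field: str, years_active: int) -> Tuple[str, str]:
    """Researchers sharing this key are benchmarked against each other."""
    return field, activity_window(years_active)


print(cohort_key("Physics", 4), cohort_key("Physics", 15))
```

`bisect_left` returns the index of the first upper bound that is greater than or equal to the input, so a researcher with exactly 5 years falls in "3-5" and one with 21 years falls in the open-ended "21+" window. Adapting the windows only requires editing the two lists.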

Structure of the ONE Index

The ONE Index is a weighted measure that captures the breadth and depth of a researcher’s contributions across dimensions of academic and societal impact. At the heart of the framework are three supra-dimensions: Performance, Interaction within the Scientific Community, and Interaction with Society.

*Currently in development.

Appendices

Supporting material, canonical links, and operational resources.

Indicators & Dimensions

ONE indicators are organised by axes and dimensions. Institutions and funders may apply configurable weights at indicator and dimension level depending on evaluation context.

Canonical indicator catalogue

The full, live and updated list of indicators is maintained on the ONE Framework page.

Open the ONE Framework catalogue

This documentation page focuses on architecture and method reference to avoid duplication and drift.

Handouts & Quick Guides

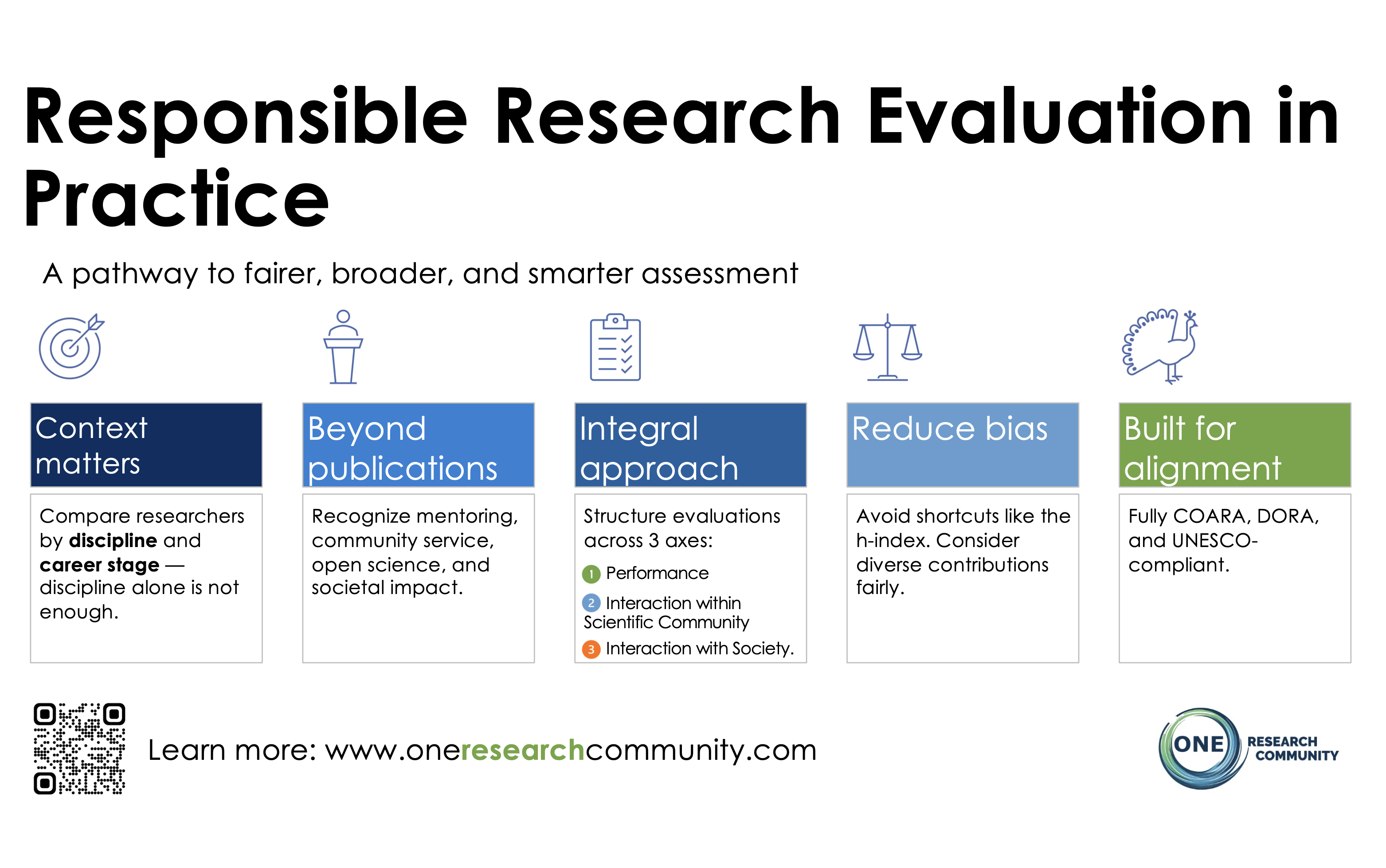

Fair, Inclusive & Smarter Evaluation

Discover how the ONE Framework redefines research assessment beyond outdated metrics.

ONE Framework

Get to know our integral, fair & transparent research assessment: 3 axes, 11 dimensions & 70+ indicators behind the ONE Index.

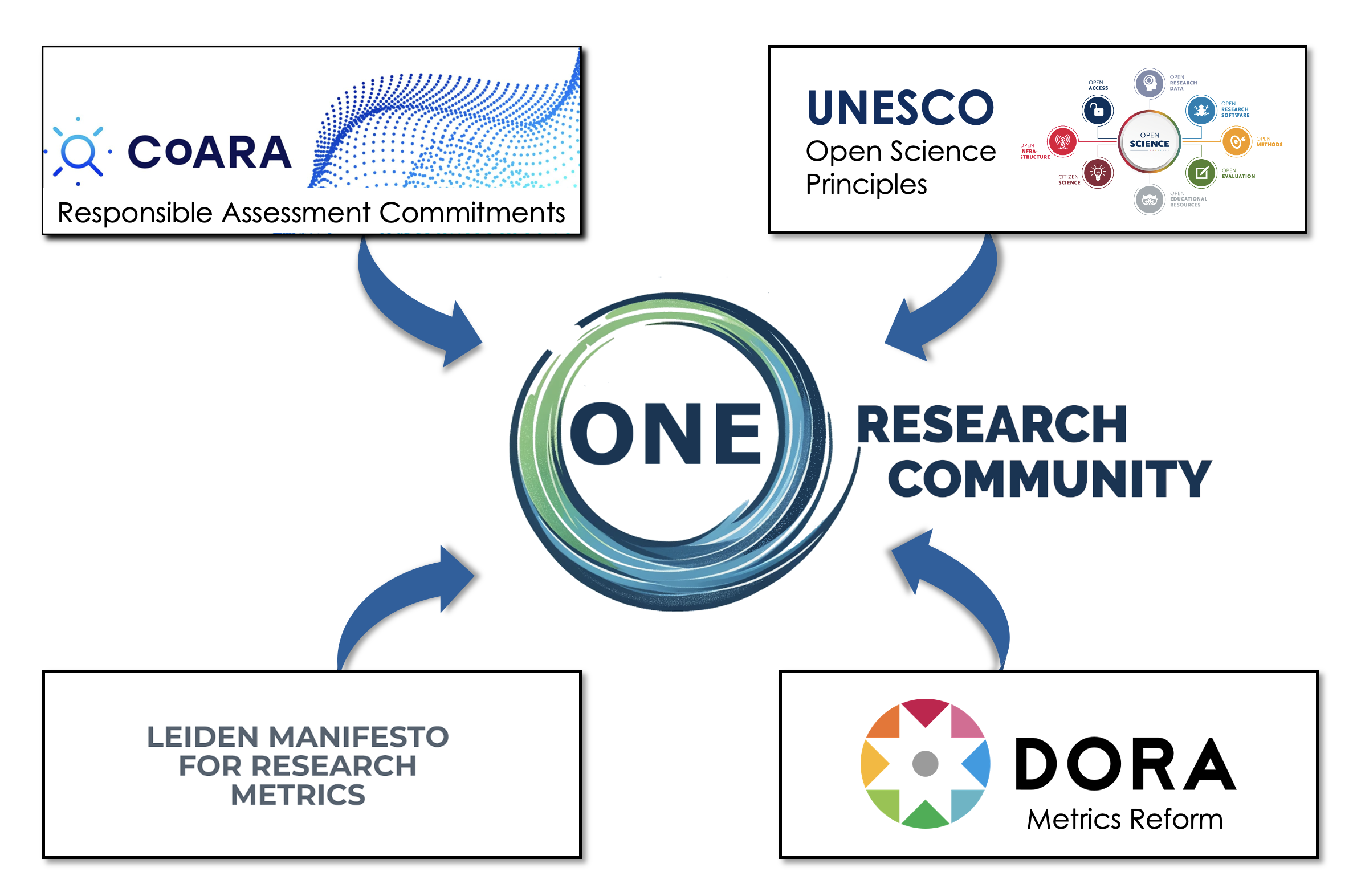

From Principles to Practice: ONE’s Global Alignment

ONE integrates principles of CoARA, UNESCO, DORA, and the Leiden Manifesto into a single operational framework.

Badges & Recognition

TL;DR

- Badges make profile information visible and interpretable.

- They reflect system status, declared roles, professional development, or public commitments.

- Badges are optional and not rankings or quality scores.

- Most badges are unlocked by completing profile sections or accepting pledges.

- Badges help others quickly understand who you are, what you do, and what you stand for.

Badges in ONE Research Community highlight profile status, declared roles, professional development, and public commitments. They are designed to make profile information more visible and interpretable, promote transparency and responsible research practices, and encourage meaningful profile completion.

Badges are optional and are not rankings or quality scores.

What badges are (and are not)

Badges are:

- Signals derived from declared information, system status, or public commitments.

- Visible markers that help others understand a researcher’s profile at a glance.

Badges are not:

- Performance evaluations.

- Quality rankings.

- Endorsements by ONE or third parties.

Badge categories

Badges are grouped into four categories, each with a distinct meaning:

- Profile status – System-recognised states related to profile identity and trust.

- Commitments & transparency – Voluntary public commitments and contextual information.

- Roles & contributions – Declared roles and activities (peer review, mentoring, advising, entrepreneurship).

- Development & profile completeness – Signals related to structured self-reflection and competence-based development.

Badge examples

Below are examples of badges available in ONE Research Community. Some badges may appear greyed out until the corresponding profile information or commitment is completed.

- Claimed Profile – System status

- Referred Profile – Peer recognition

- Validated Profile – Higher peer validation

- Trusted Contributor – Sustained contribution

- Integrity Pledge – Public commitment

- Zero Tolerance – Public commitment

- Publication Record Validated – Data stewardship

- Affiliations Validated – Data stewardship

- Inclusion Supporter – Community support

- Career Interruption Aware – Context & transparency

- Registered Reviewer – Declared role

- Institutional Ambassador – Institutional engagement

- Declared Mentor – Declared role

- Declared Advisor – Declared role

- Entrepreneur – Declared activity

- Competence Profile – Structured self-assessment

Badge list

Profile status

- Claimed Profile – Profile has been claimed and confirmed by the researcher.

- Referred Profile – Profile has been recognised by peers within the community.

- Validated Profile – Profile has reached a higher level of peer validation.

- Trusted Contributor – Recognised for sustained positive contributions to the research community.

Commitments & transparency

- Integrity Pledge – Public commitment to responsible research practices and ethical conduct.

- Zero Tolerance – Commitment to zero tolerance for harassment, discrimination, and abuse in research environments.

- Publication Record Validated – Researcher completed a publication review flow; validation remains current.

- Affiliations Validated – Researcher completed an affiliation review flow for the common affiliations block; validation remains current.

- Inclusion Supporter – Reports activities supporting inclusion, mentoring, and career development of others.

- Career Interruption Aware – Provides contextual information on interruptions to support fair evaluation.

Roles & contributions

- Registered Reviewer – Declares availability and eligibility to participate in peer review.

- Institutional Ambassador – Recognised by the institutional ambassador logic as a strong candidate to support delegations, partnerships, and external representation.

- Declared Mentor – Declares mentoring or supervision of early-career researchers.

- Declared Advisor – Declares advisory or senior guidance roles.

- Entrepreneur – Declares entrepreneurial or venture-related activity.

Development & profile completeness

- Competence Profile – Indicates completion of a structured competence self-assessment.

How badges are obtained

- Claiming and completing profile sections.

- Submitting specific forms (e.g. reviewer preferences, competences).

- Accepting public pledges.

- Reporting relevant professional activities.

Data sources & transparency

Badge states are derived from structured profile data entered by the researcher, system events (such as profile claiming), and publicly accepted commitments. The underlying data remains accessible and editable by the profile owner.
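As an illustration of how badge states can follow directly from profile data, here is a hypothetical Python sketch. The `Profile` fields and the pledge and role keys are invented for this example and do not describe ONE's data model. It covers only badges that map one-to-one onto a declared field, pledge, or system event; peer-based badges such as Referred Profile or Trusted Contributor depend on other signals and are omitted.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class Profile:
    # Hypothetical profile fields, for illustration only.
    claimed: bool = False
    accepted_pledges: Set[str] = field(default_factory=set)
    declared_roles: Set[str] = field(default_factory=set)
    competence_self_assessment_done: bool = False


PLEDGE_BADGES = {"integrity": "Integrity Pledge", "zero_tolerance": "Zero Tolerance"}
ROLE_BADGES = {
    "reviewer": "Registered Reviewer",
    "mentor": "Declared Mentor",
    "advisor": "Declared Advisor",
    "entrepreneur": "Entrepreneur",
}


def earned_badges(profile: Profile) -> Set[str]:
    """Derive badge states from declared data, system events and accepted pledges."""
    badges = set()
    if profile.claimed:  # system event
        badges.add("Claimed Profile")
    badges |= {PLEDGE_BADGES[p] for p in profile.accepted_pledges if p in PLEDGE_BADGES}
    badges |= {ROLE_BADGES[r] for r in profile.declared_roles if r in ROLE_BADGES}
    if profile.competence_self_assessment_done:
        badges.add("Competence Profile")
    return badges


profile = Profile(claimed=True, accepted_pledges={"integrity"},
                  declared_roles={"reviewer", "mentor"})
print(sorted(earned_badges(profile)))
```

Because the function reads only the profile record, editing that record (for example, withdrawing a declared role) changes the derived badges without any separate scoring step, consistent with badges being signals rather than quality assessments.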

Badges reflect declared information, public commitments, or system status. They are not rankings or quality assessments.